AI is exposing a weakness that many software teams already had: important project context is usually stored in people, not systems.

That works tolerably well when humans remain the main execution engine. It works much worse when AI agents start drafting plans, writing code, summarizing meetings, and acting on partial instructions. Agents are fast, but they are poor at carrying the full arc of a project across weeks of changes.

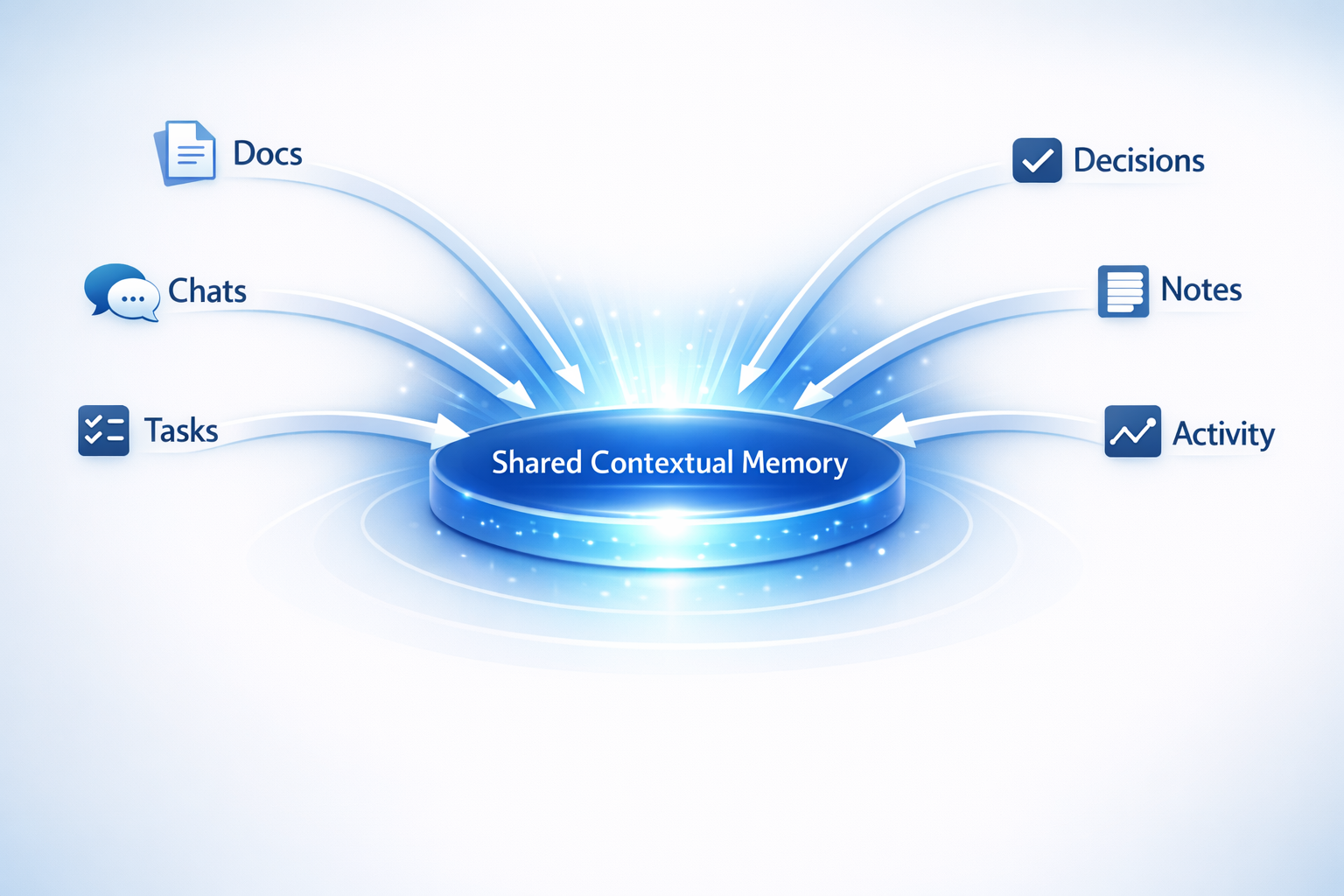

That is the problem Q is built around. The docs frame Q as a shared memory and change-control layer rather than another project management surface. In that framing, the core problem is not task organization but maintaining an inspectable, evolving record of project truth.

In practice, that means capturing project signals from multiple channels, converting them into structured memory, and preserving provenance. If a system says a request conflicts with an earlier decision, the team needs to inspect where that conclusion came from. Without that trail, AI outputs become hard to trust.
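To make "preserving provenance" concrete, here is a minimal sketch of a structured memory item that keeps pointers back to the raw signals it was derived from. The names `SourceRef`, `MemoryItem`, and `provenance_trail` and the field layout are hypothetical illustrations, not Q's actual schema or API.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class SourceRef:
    """Hypothetical pointer back to the raw signal a memory item came from."""
    channel: str          # e.g. "slack", "meeting-notes", "email"
    ref_id: str           # identifier of the original message or document
    captured_at: datetime


@dataclass
class MemoryItem:
    """A structured statement of project truth plus the evidence behind it."""
    item_id: str
    kind: str             # e.g. "decision", "requirement"
    statement: str
    sources: list[SourceRef] = field(default_factory=list)


def provenance_trail(item: MemoryItem) -> list[str]:
    """Trace an item back to its original sources, oldest first."""
    ordered = sorted(item.sources, key=lambda s: s.captured_at)
    return [f"{s.captured_at.isoformat()} {s.channel}:{s.ref_id}" for s in ordered]


decision = MemoryItem(
    item_id="dec-12",
    kind="decision",
    statement="Use PostgreSQL for the reporting service",
    sources=[
        SourceRef("meeting-notes", "2024-03-04-arch-sync", datetime(2024, 3, 4, 15, 0)),
        SourceRef("slack", "msg-8812", datetime(2024, 3, 1, 9, 30)),
    ],
)

for line in provenance_trail(decision):
    print(line)
```

The point of the design is that the statement never travels without its evidence. Anyone questioning the decision can follow the trail back to the Slack message and the meeting notes that produced it.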

The technical shape behind this is straightforward but important: a canonical memory store, an asynchronous extraction pipeline, and a retrieval layer designed to reconstruct project state over time.
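The three components can be sketched together in a few dozen lines. This is a minimal illustration under stated assumptions, not Q's implementation. It assumes an append-only in-memory store, an `asyncio` queue standing in for the asynchronous extraction pipeline, and a toy `extract` function that parses `topic: value` text where a real system would likely use a model. `Signal`, `Fact`, `MemoryStore`, and `state_as_of` are hypothetical names.

```python
import asyncio
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Signal:
    """A raw project signal from some channel (hypothetical shape)."""
    channel: str
    ref_id: str
    text: str
    at: datetime


@dataclass(frozen=True)
class Fact:
    """A structured memory entry: a value for a topic, valid from `at`, with its source."""
    topic: str
    value: str
    at: datetime
    source: str


class MemoryStore:
    """Append-only canonical store: facts are never overwritten, only superseded."""

    def __init__(self) -> None:
        self._facts: list[Fact] = []

    def append(self, fact: Fact) -> None:
        self._facts.append(fact)

    def state_as_of(self, when: datetime) -> dict[str, Fact]:
        """Reconstruct project state: the latest fact per topic at or before `when`."""
        state: dict[str, Fact] = {}
        for fact in sorted(self._facts, key=lambda f: f.at):
            if fact.at <= when:
                state[fact.topic] = fact
        return state


def extract(signal: Signal) -> Optional[Fact]:
    """Toy extractor: treats 'topic: value' text as a fact and ignores everything else."""
    if ":" not in signal.text:
        return None
    topic, value = (part.strip() for part in signal.text.split(":", 1))
    return Fact(topic, value, signal.at, f"{signal.channel}:{signal.ref_id}")


async def extraction_worker(queue: asyncio.Queue, store: MemoryStore) -> None:
    """Consume signals off the queue so ingestion never waits on extraction."""
    while True:
        signal = await queue.get()
        try:
            fact = extract(signal)
            if fact is not None:
                store.append(fact)
        finally:
            queue.task_done()


async def main() -> MemoryStore:
    store = MemoryStore()
    queue: asyncio.Queue = asyncio.Queue()
    worker = asyncio.create_task(extraction_worker(queue, store))
    for signal in [
        Signal("email", "e-1", "auth: magic links", datetime(2024, 3, 1)),
        Signal("meeting", "m-7", "auth: SSO via Okta", datetime(2024, 3, 15)),
        Signal("slack", "s-3", "thanks all!", datetime(2024, 3, 16)),
    ]:
        await queue.put(signal)
    await queue.join()  # wait until every queued signal has been processed
    worker.cancel()
    await asyncio.gather(worker, return_exceptions=True)  # let the cancelled worker finish
    return store


store = asyncio.run(main())
print(store.state_as_of(datetime(2024, 3, 10))["auth"].value)
print(store.state_as_of(datetime(2024, 3, 31))["auth"].value)
```

Because nothing is overwritten, retrieval can answer "what did we believe on March 10?" ("magic links") as easily as "what do we believe now?" ("SSO via Okta"). That is the recency behavior the comparison table below refers to.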

A quick comparison makes the distinction clearer:

| Capability | Project tracker | Static docs | Memory layer |
|---|---|---|---|
| Provenance to original source | Limited | Manual | Built in |
| Recency handling | Weak | Often stale | Tracks newer states |
| Decision continuity across weeks | Partial | Fragmented | Core function |
| Useful for AI retrieval | Low to medium | Medium | High |

This also changes how teams handle drift. A client asks for one thing, an older decision says another, and implementation moves before anyone notices the mismatch. A memory layer does not eliminate that risk, but it makes the mismatch visible earlier and with better evidence.
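The drift scenario can be sketched as a simple check. It compares a new request against the most recent decision on the same topic and returns evidence for both sides when they disagree. This is a hypothetical illustration that assumes decisions and requests have already been normalized into topic/value pairs. Real requests would need semantic rather than exact string matching. `Decision`, `Mismatch`, and `check_drift` are illustrative names, not part of Q.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Decision:
    topic: str
    value: str
    decided_on: date
    source: str  # where the decision was recorded


@dataclass(frozen=True)
class Mismatch:
    topic: str
    requested: str
    request_source: str
    decided: str
    decision_source: str


def latest_decision(decisions: list[Decision], topic: str) -> Optional[Decision]:
    """Most recent decision on a topic, so superseded decisions are not used as the baseline."""
    relevant = [d for d in decisions if d.topic == topic]
    return max(relevant, key=lambda d: d.decided_on, default=None)


def check_drift(
    decisions: list[Decision], topic: str, requested: str, request_source: str
) -> Optional[Mismatch]:
    """Flag a request that contradicts the current decision, citing evidence for both sides."""
    current = latest_decision(decisions, topic)
    if current is None or current.value == requested:
        return None
    return Mismatch(topic, requested, request_source, current.value, current.source)


decisions = [
    Decision("export-format", "CSV only", date(2024, 2, 2), "meeting:kickoff"),
    Decision("export-format", "CSV and XLSX", date(2024, 2, 20), "email:e-41"),
]

print(check_drift(decisions, "export-format", "PDF", "client-call:c-9"))
print(check_drift(decisions, "export-format", "CSV and XLSX", "ticket:T-88"))
```

The check does not decide who is right. It only makes the mismatch visible early and attaches the sources, which is the behavior described above.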

One healthy boundary in the docs is that the system is not treated as a magical authority. It remembers, links, and surfaces evidence; humans still govern publication and conflict resolution. In mixed human-agent workflows, that is the right split.
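One way to encode that split is a review gate. The system may only create proposals with attached evidence, and a named human must accept or reject each one before it becomes published project truth. This is a hypothetical sketch of the workflow the docs describe, not Q's API. `ReviewQueue`, `Proposal`, and `Status` are assumed names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PROPOSED = "proposed"    # surfaced by the system, not yet trusted
    PUBLISHED = "published"  # accepted by a human into project truth
    REJECTED = "rejected"


@dataclass
class Proposal:
    statement: str
    evidence: list[str]
    status: Status = Status.PROPOSED
    reviewer: Optional[str] = None


class ReviewQueue:
    """The system may only propose; resolving a proposal requires a named human reviewer."""

    def __init__(self) -> None:
        self.items: list[Proposal] = []

    def propose(self, statement: str, evidence: list[str]) -> Proposal:
        proposal = Proposal(statement, evidence)
        self.items.append(proposal)
        return proposal

    def resolve(self, proposal: Proposal, reviewer: str, accept: bool) -> None:
        if not reviewer:
            raise ValueError("a human reviewer must be named to resolve a proposal")
        if proposal.status is not Status.PROPOSED:
            raise ValueError("proposal has already been resolved")
        proposal.status = Status.PUBLISHED if accept else Status.REJECTED
        proposal.reviewer = reviewer

    def published(self) -> list[str]:
        return [p.statement for p in self.items if p.status is Status.PUBLISHED]


queue = ReviewQueue()
p = queue.propose("Export format is CSV and XLSX", ["email:e-41"])
print(queue.published())  # prints [] because nothing is published until reviewed
queue.resolve(p, reviewer="dana", accept=True)
print(queue.published())
```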
